There’s an old Chinese story about a farmer whose livelihood depends on a single horse.

One day the horse runs away. The neighbors stop by and say, “That’s bad.” The farmer replies,

“Maybe.”

A few days later the horse returns—bringing several wild horses with it. The neighbors return and say, “That’s good.” The farmer replies,

“Maybe.”

The farmer’s son tries to train one of the horses, falls, and breaks his leg. The neighbors say, “That’s bad.” The farmer replies,

“Maybe.”

Later, soldiers come through the village to draft young men into war. The injured son is passed over. The neighbors say, “That’s good.” The farmer replies,

“Maybe.”

This isn’t a story about a horse. It’s a story about how humans make meaning.

The neighbors are responding to what’s visible, concrete, and consequential right now. Loss matters. Gain matters. Injury matters. Relief matters. Their reactions are grounded in care and concern for what’s immediately at stake.

The farmer isn’t offering a counter-argument. He’s not denying what’s happening. He’s simply refusing to declare what the story means before the story has finished unfolding.

Everyone is making reasonable observations; they are simply working from shorter or longer time horizons.

I’m trying to imagine that same sequence playing out in today’s cultural, political, and technological environment in the United States.

Horse escapes.

Social media lights up. Who’s responsible? Who failed? Whose values caused this? Influencers, bloggers, podcasters, and pundits rush in. Posts spread fast. Certainty spreads faster.

Horse returns with other horses.

Narratives flip. Memes abound. Screenshots get recycled. Victory laps begin. Old judgments become new accusations.

Son gets injured.

Outrage spikes again. Someone’s proven reckless. Someone else is proven naïve. The algorithm rewards intensity. Conspiracies proliferate.

Son avoids Army draft.

More conspiracies. Draft-dodging accusations abound. Cycles and certainties repeat. New blame. More drama. Same structure.

What’s changed since the ancient story isn’t human nature.

It’s the environment we make meaning inside.

Algorithms reward confidence, outrage, and side-taking.

Biases that justify our reactions get reinforced.

Speed collapses time.

Scale magnifies reaction.

Attention economies evolved from dopamine to adrenaline. When novelty and validation stopped holding us, platforms shifted to provocation and conflict to sustain engagement. Outrage didn’t emerge with AI and social media algorithms; it’s the fuel they run on.

So that’s on us humans, who devised these “enhanced communication tools” in the first place and who keep using them.

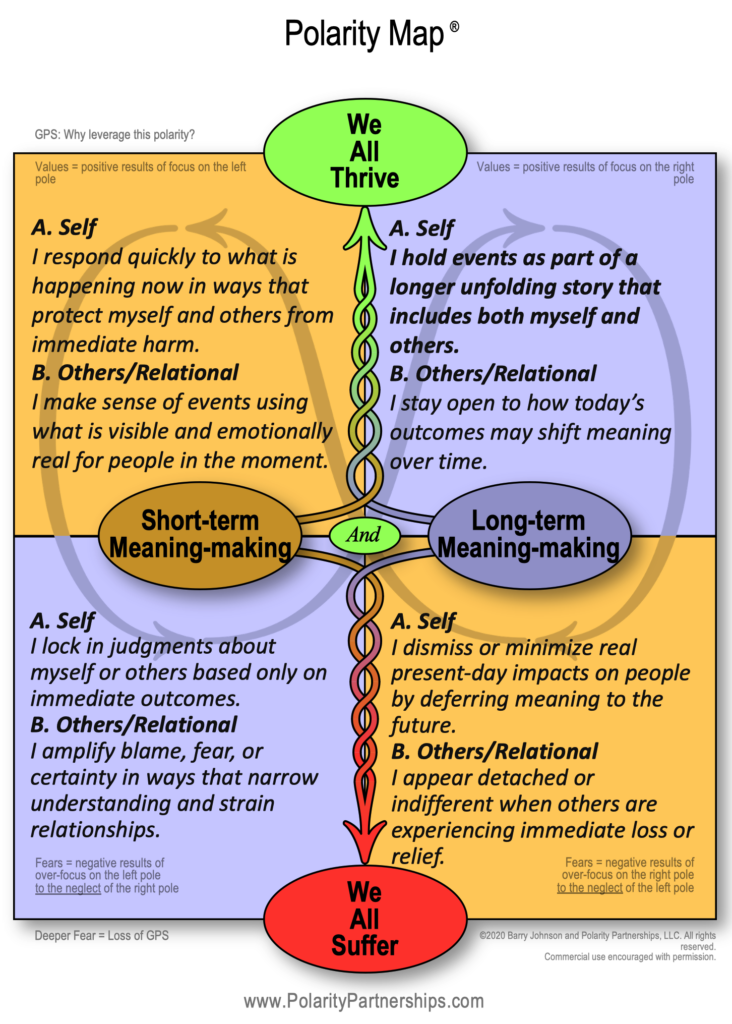

Through a polarity lens, one tension in both ancient and modern stories is:

Short-term sensemaking helps us respond, protect, and take responsibility for immediate impact.

Long-term sensemaking helps us notice patterns, reversals, and cumulative effects.

Both are true. Neither is a complete picture.

SHORT-TERM SENSEMAKING AND LONG-TERM SENSEMAKING (See Polarity Map):

If you want to play with this a bit, you can explore “Seeing” polarities with AI Cliff here.

Is it time to go deeper and broader to get your Polarity Advantage? Check out our Certification and Course Options (including online self-paced) HERE