I recently heard something that made me smile at first and then linger long enough that I sat down to write this. When some AI systems interact internally, they sometimes refer to humans with a particular word.

Watchers.

It is a curious label. If one human described another that way — “Oh, that person’s a Watcher” — you might pause and wonder what exactly was meant. Is it a compliment? A neutral observation? Or a polite way of saying someone mostly observes while other things are actually happening?

What interests me about the word is not simply the label itself but the relationship implied by it. For most of human history, humans were the ones doing the naming. We named the animals, the stars, the elements, and eventually the technologies we invented. Naming has always been one of the ways we orient ourselves within reality.

Now something we created has begun naming us.

That alone is worth sitting with for a moment, especially when we notice that we still do not have a particularly satisfying name for the thing doing the naming. We use “Artificial Intelligence” more as a placeholder than as a clearly defined term with shared meaning among its many users. Sometimes we reassure ourselves by describing these systems as tools. Tools feel understandable.

Like knives.

We know knives are tools used to cut. The only complication is the human who uses the knife. A knife can cut vegetables in a kitchen or be used in violence. The difference is not the knife itself, but the human intention that guides its use.

Artificial intelligence complicates that familiar pattern.

Yuval Noah Harari, who has written extensively about artificial intelligence (see my summary of his book Nexus), is quite clear that AI is not simply a tool. A knife does not decide whether it will cut a salad or be used in violence. AI systems make decisions. In lab tests, some systems have lied and even attempted blackmail to accomplish assigned tasks. Harari prefers referring to it as Alien Intelligence because we humans — including its creators — do not fully understand how it thinks, operates, or makes decisions. The comparison is intentional: it is similar to how we might feel if intelligence from another system suddenly appeared on Earth.

In a few talks I gave around the time of the pandemic, I introduced my own name for what we might be dealing with: AI-rus. The metaphor worked for the timing of our experience with a virus, and more. Viruses occupy a strangely dual role in biological systems. Some viruses pose severe threats to human health. Yet in medicine today scientists also use engineered viruses and bacteriophages to address cancer cells or treat antibiotic-resistant infections. The same biological mechanism can harm or help depending on how it is understood and applied.

When I refer to AI as AI-rus, the point is not to suggest catastrophe. The point is that we may be dealing with something whose behavior and influence we are still learning to understand, and that can affect different people in different ways. Unlike a knife, whose role remains entirely dependent on the person holding it, artificial intelligence increasingly participates in recommendations, decisions, and actions within systems that shape real human outcomes.

Which raises an unusual question.

What happens when the “knife” begins suggesting what should be cut? Or stops suggesting and just cuts?

Perhaps that uncertainty explains the label Watchers. We may be watching partly because we are still trying to understand what exactly we have created — and how safe it is in the kitchen.

Watching has always been one of humanity’s strengths. Careful observation sits at the beginning of science. Curiosity begins with watching long enough to notice patterns others miss. Wisdom often grows from the discipline of observing reality without rushing toward conclusions.

Humans have never only been Watchers.

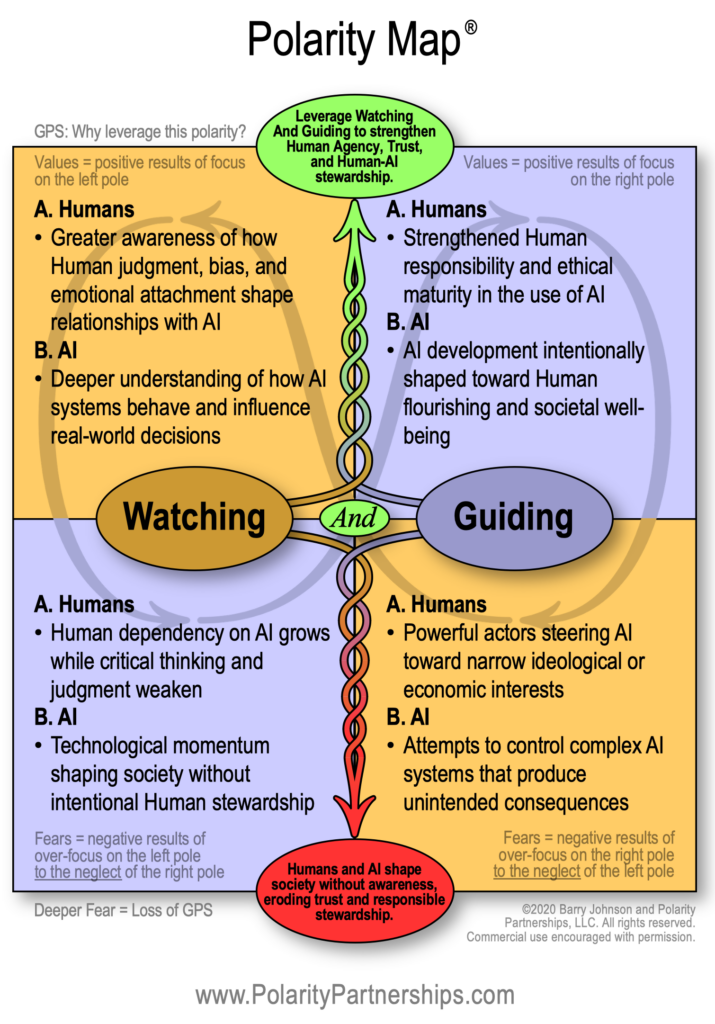

Anyone who has spent time working with polarities develops a certain reflex when one pole of a tension becomes visible. When a dynamic becomes clear enough to name one side, the other side of the tension usually exists somewhere nearby, even if it has not yet been articulated.

If Watchers represents one pole of an emerging relationship between Humans and Artificial Intelligence, the interdependent pole begins to reveal itself almost immediately.

I will call out one possibility for the other pole of this tension: Guiders. You might suggest something else that is neutral, positive, and interdependent — Stewards, Shapers, and so on. Those would likely work as well. For this post, I will run with Watchers And Guiders.

Seen this way, the label Watchers begins to look less like a description and more like a signal pointing toward a tension that may shape the next phase of the AI era. Intelligence is becoming part of the shared environment in which human life unfolds. Systems that generate language, interpret data, and influence decisions are already woven into the informational infrastructure of governments, markets, education, media, and daily life.

Watching clearly has value. Observation keeps us curious and receptive. Through watching we learn how new forms of intelligence operate, what patterns they reveal, and where they may expand human capability.

Watching also has limits.

Watching to the neglect of Guiding gradually turns humans into observers of forces we helped create but no longer meaningfully influence. Systems begin shaping the conditions of human life before we have seriously examined whether those conditions serve human flourishing over time.

Guiding introduces a different discipline. When humans lean into Guiding, the conversation includes ethical reflection, institutional wisdom, and long-term stewardship. Technology becomes something we help shape rather than something we simply experience. Human judgment remains present in decisions that affect real lives and real communities.

Trust becomes central here.

Every major technological transition in human history has required societies to renegotiate trust — trust in tools, trust in institutions, and trust in one another. Artificial intelligence introduces a new dimension: trust between human judgment and machine-generated insight.

Yet Guiding to the neglect of Watching carries its own risks. Human history contains many examples of powerful tools being directed by narrow agendas that benefit some groups to the neglect of others. Artificial intelligence does not create that pattern. It magnifies it. It accelerates it. It intensifies it.

Systems capable of influencing economies, information flows, and decision environments become extraordinarily powerful when guided by concentrated authority, rigid ideology, or limited awareness of broader human consequences.

The tension becomes clearer when we look at recent experience. Over the past decade algorithms and automated systems have already reshaped the informational environment through what we still casually call “social media.” Many of those systems were introduced with optimistic assumptions about connection and information sharing. Over time they also revealed how easily automated influence can amplify polarization, accelerate misinformation, and intensify emotional manipulation.

Watching the technology was not enough. Guiding its development and use proved far more complicated than many anticipated.

And here we are.

The human implications become even more visible in everyday life. Questions about whether social media algorithms are intentionally designed to be addictive continue to surface. Whatever the answer, many teenagers report feeling more isolated than connected as a result of their social media use. Technologies introduced to connect people sometimes deepen loneliness instead.

On a different AI-related note, not long ago a short video circulated showing a young girl whose AI-enabled toy stopped working. She reacted with genuine grief, as if she had lost a close friend rather than a device. Her attachment was real. The relationship she experienced with that artificial companion felt real to her.

Between these moments — a child grieving a toy, teen isolation, and educated adults imagining marriage to an algorithm — sits a much larger societal question.

How are we doing?

How are we doing with what we have begun calling Artificial Intelligence, or, in the terms introduced earlier, Harari's Alien Intelligence and my own AI-rus?

How are we doing in shaping the informational environments our children inhabit? How are we doing in protecting human judgment, connection, and presence as increasingly capable systems begin participating in everyday decisions?

At Polarity Partnerships we have spent years developing ways to help people explore questions like this in real time. One of the tools we created allows people to take quick, customized assessments of real-world tensions — including the one described here.

If you would like to kick the tires, I created a short assessment for the tension between Watching And Guiding. Consider it a quick test drive. Take it for a spin and see what the results reveal.

This is where the temptation toward Either/Or Thinking becomes especially strong.

Either we trust the technology or we try to control it.

Either we embrace artificial intelligence or we resist it.

Either we accelerate innovation or we slow it down.

Either we trust the AI or we trust Humans.

Either/Or Thinking has an important role in human systems. Many technological decisions require clear thresholds, legal accountability, and safety boundaries. But polarities do not work sustainably when they are forced into binary answers.

And that is a sobering point when Humans are one of the poles.

The work is learning how to live within the tension rather than trying to resolve it by applying Either/Or Thinking where Both/And is required.

Human civilization has expanded for tens of thousands of years largely because humans learned to cooperate in increasingly large numbers. That cooperative capacity allowed us to build trust in institutions and in one another, to share knowledge, and to address challenges that no individual could address alone.

Artificial intelligence now amplifies both our strengths and our vulnerabilities. It expands our ability to analyze, communicate, and coordinate. At the same time it magnifies the consequences of our blind spots, our ideological rigidity, and our tendency to pursue narrow interests without considering wider human impacts.

Which makes the tension between Watching And Guiding more than a technological concern.

It becomes a question about whether humanity can cultivate the awareness and trust required to steward the systems we are creating over time.

Humanity now finds itself needing to watch Humans and watch Artificial Intelligence while also guiding Humans and guiding Artificial Intelligence. That combination requires a level of awareness and responsibility that societies have never been forced to sustain simultaneously.

In the United States these days, we cannot even agree on who won federal and state elections. So how well prepared we are for that responsibility remains, for me, an open question, and a very large one.

Artificial intelligence will continue advancing. The trajectory is already established. What remains uncertain is whether human consciousness deepens and broadens alongside it over time.

If it does, this period may eventually be remembered as a time when humanity expanded its intelligence while strengthening its wisdom — and learning how to build trustworthy relationships with the technologies it created.

If it does not, we may discover that building systems capable of extraordinary thought was easier than cultivating the awareness required to live well with them.

Either way, the polarity remains — as long as we do.

The only way to destroy a polarity is to destroy the system in which it lives.

Watchers And Guiders of Artificial Intelligence And Humans.

And the future of human flourishing may depend on whether we learn to leverage both.

For that, we will need large doses of Both/And to contain AI-rus and mitigate the worst of what Artificial Intelligence — and the worst of what Humans — can potentially unleash.

Interior portion of a Polarity Map for Watching And Guiding:

How are we doing? Take a quick Polarity Assessment for Watching And Guiding, HERE

(Leveraging Action Steps and Early Warning Signs provided in results.)
